Artemis I is taking off very soon (I hope, because as I send this issue, there are some technical difficulties). I don’t know about you, but I’m hugely excited to see it happen! Lately, I have been thinking about starting a series of robotics coffee breaks with no fixed topics, just a virtual space to hang out without any particular agenda. If you have any thoughts about this idea, please don’t hesitate to let me know! As usual, the publication of the week section is manned by Rodrigo. Last week’s most clicked link was Xiaomi’s latest humanoid robot, with 9.5% of opens.

NASA Live: Official Stream of NASA TV

Onwards and upwards! Artemis I is launching around 2 PM CEST (8 AM Eastern time). It is the first in a series of moon exploration flights that should end with human boots on the moon by mid-decade. In this mission, the spacecraft will orbit the moon and collect scientific data. You can learn more about the mission on NASA’s website, and for an overview of the science missions, I highly recommend this piece by Jatan’s Space.

Practical Tips for Writing Robotics Conference Papers that get Accepted

In this video, milfordrobotics shares a good set of tips on writing papers. I highly recommend it, especially if you are just starting out as a robotics researcher!

Debrief: The Reddit Robotics Showcase 2022

The Reddit Robotics Showcase took place at the end of last month. This article contains links to all the sessions held throughout the event.

Knife Throwing Machine Gets The Spin Just Right

What better way to bond with your child than building a knife-throwing machine? Quint’s device hits the target just right every time. One thing I found interesting about this build is the knife-rotating carriage that spins the projectile. The carriage needed a power input to spin the payload, so Quint and his son built a power rail for the carriage to make contact with. Their reaction to their first successful shot makes the whole video worth a watch, in my opinion.
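To get a feel for what "getting the spin just right" means, here is a back-of-the-envelope sketch. It assumes the knife leaves the carriage pointing at the target, flies in a straight line at constant speed (ignoring gravity and drag), and should complete a whole number of turns before it lands. The function name and the numbers below are hypothetical and are not taken from Quint’s build.

```python
def required_spin_rpm(distance_m: float, speed_m_s: float, turns: int) -> float:
    """Spin rate (revolutions per minute) needed for the knife to complete
    `turns` full rotations during its flight to the target.

    Simplified model (an assumption): straight-line flight at constant speed,
    so flight time = distance / speed, and rotations = spin_rate * flight_time.
    """
    if distance_m <= 0 or speed_m_s <= 0:
        raise ValueError("distance and speed must be positive")
    flight_time_s = distance_m / speed_m_s
    revs_per_second = turns / flight_time_s
    return revs_per_second * 60.0


# Hypothetical example: a target 3 m away, knife released at 10 m/s, one full turn.
print(required_spin_rpm(3.0, 10.0, 1))  # roughly 200 rpm
```

The takeaway is that the required spin scales with release speed and inversely with distance, so changing either one without adjusting the carriage’s spin rate will make the knife arrive at the wrong angle.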

ATOM Calibration

“ATOM is a set of calibration tools for multi-sensor, multi-modal, robotic systems, based on the optimization of atomic transformations as provided by a ROS-based robot description. Moreover, ATOM provides several scripts to facilitate all the steps of a calibration procedure”. Looking at the video embedded in the repository, I can see how some of these tools can be useful for visualizing and manipulating sensor data. For me, visualizing sensor frustums would come in handy on multiple occasions.
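As a rough idea of what a frustum visualization has to compute, the sketch below returns the eight corners of a camera’s viewing frustum from pinhole intrinsics. This is not ATOM’s code or API; it assumes an undistorted pinhole model and the usual optical-frame convention (x right, y down, z forward), and the near/far clipping depths are arbitrary choices.

```python
from typing import List, Tuple

Point3 = Tuple[float, float, float]


def frustum_corners(fx: float, fy: float, cx: float, cy: float,
                    width: float, height: float,
                    near: float, far: float) -> List[Point3]:
    """Return the 8 corners of a pinhole camera frustum in the camera frame.

    The first four corners lie on the near plane, the last four on the far
    plane, each in image-corner order: top-left, top-right, bottom-right,
    bottom-left.
    """
    image_corners = [(0.0, 0.0), (width, 0.0), (width, height), (0.0, height)]
    corners: List[Point3] = []
    for depth in (near, far):
        for u, v in image_corners:
            # Back-project pixel (u, v) onto the plane z = depth.
            x = (u - cx) * depth / fx
            y = (v - cy) * depth / fy
            corners.append((x, y, depth))
    return corners


# Hypothetical 640x480 camera with a 500 px focal length.
for corner in frustum_corners(500, 500, 320, 240, 640, 480, near=1.0, far=2.0):
    print(corner)
```

Connecting each near corner to its far counterpart (and the corners within each plane) gives the familiar truncated-pyramid outline; transforming these points through the robot description would place the frustum in the world frame.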

UW Off-road Autonomous Driving - Full Speed or Nothing

YouTube (UW Robot Learning Lab)

If you happen to be starting your day by reading this newsletter and would like to get energized, then I can’t think of a better way than watching this video of the University of Washington’s off-road autonomous Polaris, funded by DARPA’s RACER program. According to the video’s description, the team relies only on onboard sensors, without GPS or predefined maps for localization. Watching the footage, it indeed seems like a full-speed or nothing approach.

Publication of the Week - Ctrl-VIO: Continuous-Time Visual-Inertial Odometry for Rolling Shutter Cameras (2022)

As the name suggests, rolling shutter cameras do not capture a frame all at once; they record it line by line, with the scan rolling across the scene vertically, horizontally, or rotationally. Because of the delay between successive lines, this scan can distort fast-moving objects across the whole frame. This paper presents a visual-inertial odometry (VIO) method that accounts for the distortion caused by the shutter. The algorithm both uses B-splines to model the camera motion in continuous time and calibrates the scan-line delay online. The method proved accurate and robust, but it is still computationally expensive, which makes it hard to run in real time. Results and videos are available at this link.
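To illustrate why a continuous-time trajectory suits rolling shutter cameras, here is a simplified sketch: every image row gets its own timestamp (frame start plus row index times line delay), and a uniform cubic B-spline is queried at each of those times. It models translation only and treats the line delay as known; Ctrl-VIO models the full pose and estimates the line delay online, which this sketch does not attempt. All function names are hypothetical.

```python
import math
from typing import List, Sequence, Tuple

Vec3 = Tuple[float, float, float]


def cubic_bspline_position(control_points: Sequence[Vec3], t0: float,
                           dt: float, t: float) -> Vec3:
    """Evaluate a uniform cubic B-spline (translation only) at time t.

    Segment i covers [t0 + i*dt, t0 + (i+1)*dt] and blends control points
    i, i+1, i+2, i+3 with the standard uniform cubic B-spline basis.
    """
    num_segments = len(control_points) - 3
    if num_segments < 1:
        raise ValueError("need at least 4 control points")
    s = (t - t0) / dt
    if s < 0 or s > num_segments:
        raise ValueError("time outside the spline's valid range")
    i = min(int(math.floor(s)), num_segments - 1)  # clamp t at the very end
    u = s - i
    weights = (
        (1 - u) ** 3 / 6,
        (3 * u**3 - 6 * u**2 + 4) / 6,
        (-3 * u**3 + 3 * u**2 + 3 * u + 1) / 6,
        u**3 / 6,
    )
    pts = control_points[i:i + 4]
    return tuple(sum(w * p[k] for w, p in zip(weights, pts)) for k in range(3))


def row_timestamps(frame_start: float, num_rows: int, line_delay: float) -> List[float]:
    """Each image row is exposed line_delay seconds after the previous one."""
    return [frame_start + r * line_delay for r in range(num_rows)]


def rolling_shutter_positions(control_points: Sequence[Vec3], t0: float, dt: float,
                              frame_start: float, num_rows: int,
                              line_delay: float) -> List[Vec3]:
    """Camera position at the capture time of every row of one frame."""
    return [cubic_bspline_position(control_points, t0, dt, t)
            for t in row_timestamps(frame_start, num_rows, line_delay)]


# Hypothetical camera moving along x; 3 rows captured 10 ms apart.
ctrl = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (2.0, 0.0, 0.0), (3.0, 0.0, 0.0), (4.0, 0.0, 0.0)]
print(rolling_shutter_positions(ctrl, t0=0.0, dt=0.1, frame_start=0.0,
                                num_rows=3, line_delay=0.01))
```

With a global shutter, one pose per frame would suffice; here each row sees the scene from a slightly different position, which is exactly the effect a rolling-shutter-aware VIO has to model.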

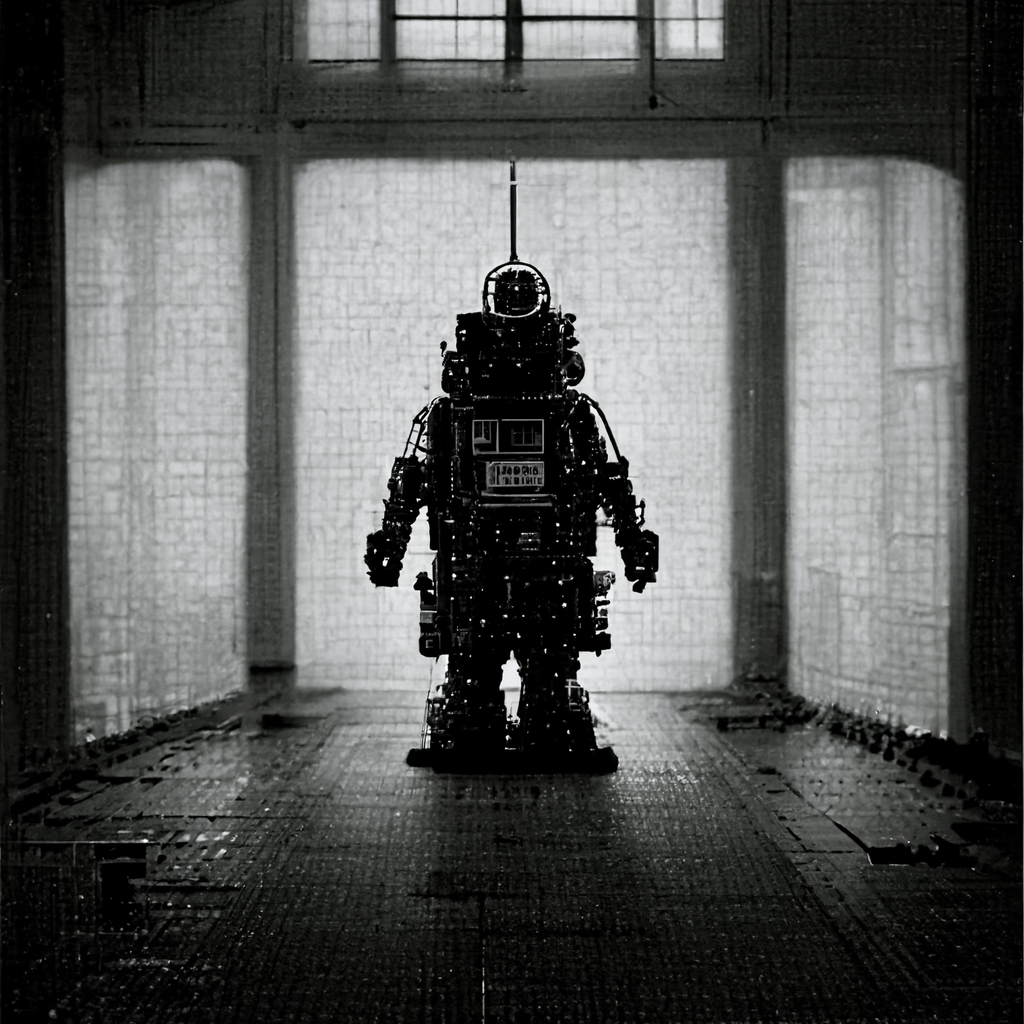

AI Image of the Week