Today, I'm in travel mode, so let's get to the point and look at some robots (and children's toys)!

Outside the Bounding Box: VLA Segmentation for Temporal Consistency & Reduced Data Drift [Sponsored]

What if you could automate video segmentation, cut annotation time, improve temporal consistency, and scale datasets for VLA models? On Dec 9th, Encord’s ML experts will show you how to:

- Eliminate manual labeling with automated workflows

- Boost annotation speed & accuracy using SAM 2

- Label over time for temporally aware datasets

- Integrate outputs directly into robotics pipelines

A VLA that Learns from Experience

I am actually mind-blown by these results from Physical Intelligence. Their pi0.6 model combines a VLA with recovery and some reinforcement learning, achieving 90%+ accuracy on some tasks. The paper also reports the number of tasks completed per hour, which I believe is a powerful metric for the usefulness of a setup like this.

A Dynamic Robot That Can Throw, Catch, and Hit a Baseball

Researchers at RAI created a low-impedance robot platform and equipped it to throw balls and hit them with a bat. They also paired the robots up to practice together. I really like that so much of the research coming from RAI is this eye-catching, making for nice robotics content.

Low-Latency Event-Based Velocimetry for Quadrotor Control in a Narrow Pipe

This might have gone unnoticed, but I just came across this video from the UZH Robotics and Perception Group, which created an event-based motion capture system for their drone that boasts 1.5 ms latency (!!). The work involved using event cameras for smoke velocimetry, and the end result is quite interesting.

LocoTouch: Tactile Sensing Systems for Four-Legged Robots

To push the boundaries of robotic capability, researchers in the Department of Mechanical Engineering at Carnegie Mellon University, in collaboration with the University of Washington and Google DeepMind, have developed a new tactile sensing system that enables four-legged robots to carry unsecured, cylindrical objects on their backs. This system, known as LocoTouch, features a network of tactile sensors that spans the robot’s entire back. As an object shifts, the sensors provide real-time feedback on its position, allowing the robot to continuously adjust its posture and movement to keep the object balanced.

2025 PX4 Developer Summit Talks

The talks from the PX4 Developer Summit 2025 are now out, and you can find them in this playlist. I highly recommend checking them out! I quite enjoyed the talk on throwing drones out of the window.

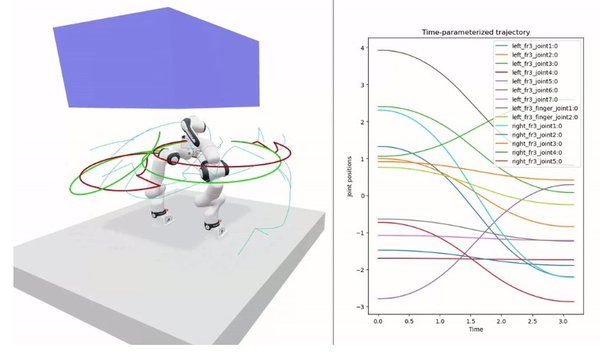

roboplan

It looks like we have a new kid on the block in the motion planning space. Roboplan is an open-source motion planning library based on Pinocchio.

I Built a Synth for My Daughter

Not really robotics, but I found this too much fun not to share. A very cool idea and a nice execution!

Events

- Automated Guided Vehicle (AGV) Session, ICCMA 2025: Nov 24 - Nov 26, 2025 (deadline: Aug 20, 2025). Paris, France

- European Robotics Week: Dec 03 - Dec 04, 2025. Tallinn, Estonia

- iREX 2025 (International Robot Exhibition): Dec 03 - Dec 06, 2025. Tokyo, Japan

- ROSCon India 2025: Dec 18 - Dec 20, 2025. Pune, India

- FOSDEM 2026: Jan 31 - Feb 01, 2026. Brussels, Belgium

- Embedded World 2026: Mar 10 - Mar 12, 2026. Nuremberg, Germany

- European Robotics Forum: Mar 23 - Mar 27, 2026. Stavanger, Norway

- Commercial UAV Forum: Apr 22 - Apr 23, 2026. Amsterdam, Netherlands

- Open Hardware Summit: May 22 - May 23, 2026. Berlin, Germany

- ICRA 2026: Jun 01 - Jun 05, 2026. Vienna, Austria

For more robotics events, check out our event page.

Want to promote your product or service in Weekly Robotics? Check out our advertising options.